“There is broad consensus in the scientific community that wildfire behavior is changing across the American West in general, and in California in particular. … All indicators shown—wildfire occurrence, total area burned, and average fire size—display an upward trend in the last several decades across the state.” The Costs of Wildfire in California, California Council on Science and Technology, 2020

California has a habit of going up in flames on a yearly basis, leaving cyberpunk-worthy scenes as a result. The devastation carries a visceral impact, with heart-wrenching scenes of lives and families torn apart amid the physical and economic damage.

Despite the prevalence of this story in our cultural zeitgeist, however, the complexity of wildfires—their causes, their contributing and moderating factors, and the data itself—leaves vast room for snap judgements but no clear-cut answers to critical questions. There is a systematic and comprehensive lack of good-quality information in general about what’s going on with wildfires, including the cause of many of them—this is a refrain throughout the report cited at the beginning of this article. Ideally there would be a central website containing easy-to-access datasets, predictive models, incident reports, budgets, and so forth, adequately sourced and updated. In the absence of that, I examined all the available data, cross-checking sources against one another, seeking the most credible information, and creating my own datasets when none existed.

In this article I’ll conduct a data-driven examination of the critical questions: How bad are California wildfires compared to previous decades and centuries? What causes them, and how much are humans to blame? Where does climate change come in? Is forest management a major contributing factor? And what steps can be taken to minimize damage?

Let’s start with what’s really happening. While it may seem like a given that wildfires are getting worse, it’s worthwhile to begin our exploration by testing our basic assumptions, and in doing so we find a story that is more nuanced than it might first appear.

Are we sure it’s worse?

Wildfire research generally looks at two separate but related sets of annual data points—total number of wildfires, and total acres burned. Focusing attention on total acres burned is probably more useful for exploring what can be done about the large, out-of-control wildfires that do the most damage.

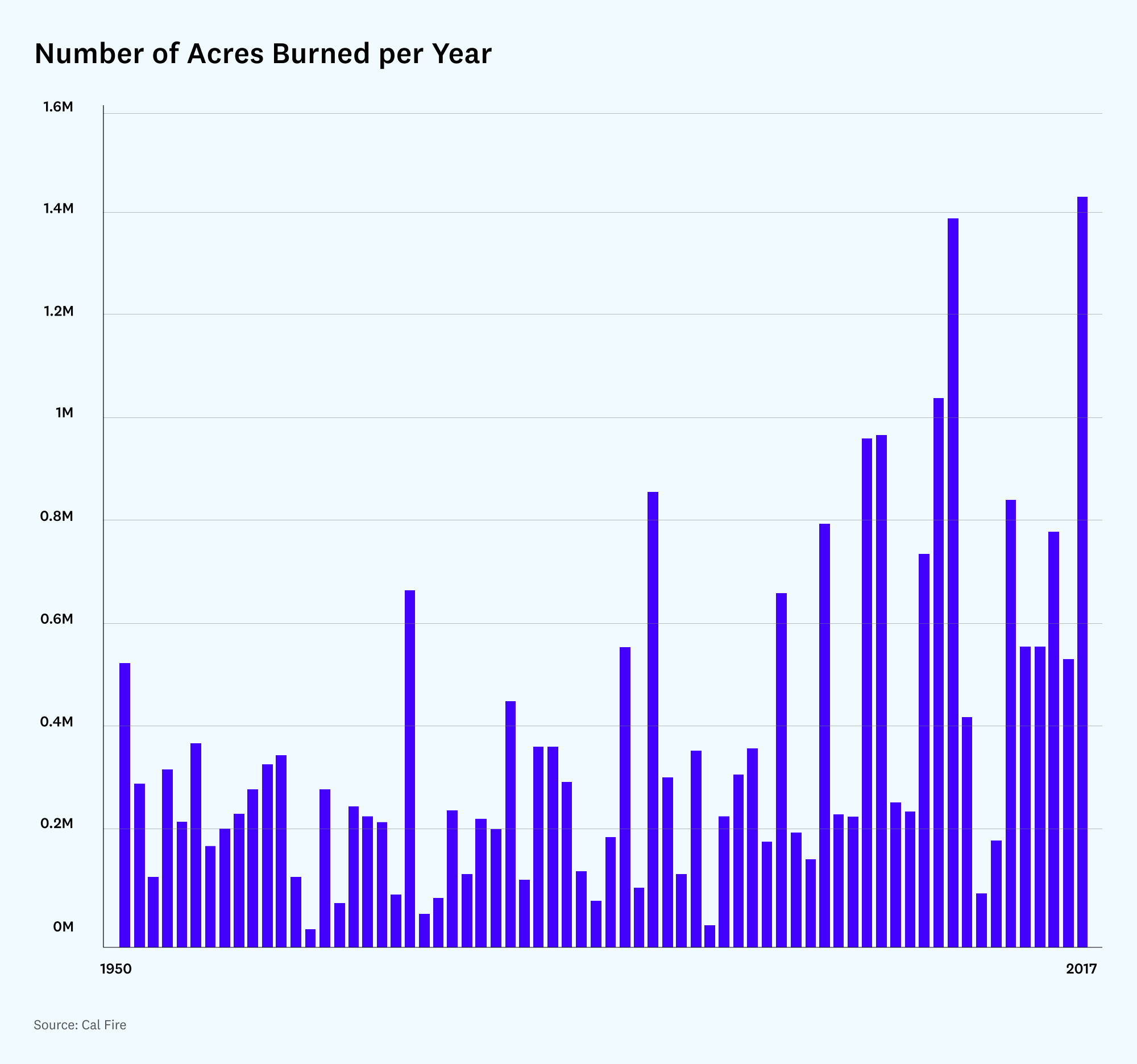

When we examine data from the California Department of Forestry and Fire Protection (Cal Fire) for the years 1950-2017, we note a peculiarity: the number of acres burned is highly variable from year to year. Any given year is far from certain to be a particularly bad one.

Despite this annual variability, however, it seems clear that, in the last seventy years, there is an increasing trend in total acres burned per year. The worst five years in the period covered by this graph, for example, all have occurred since 2002.
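One way to make these two claims concrete is to compute a least-squares slope and the top-ranked years for a series of annual totals. The Python sketch below is illustrative only: the function names `linear_trend` and `worst_years` and the `toy` values are assumptions made up for the example, not Cal Fire figures.

```python
def linear_trend(series):
    """Least-squares slope of value against year for a {year: value} mapping."""
    years = list(series)
    n = len(years)
    mean_x = sum(years) / n
    mean_y = sum(series.values()) / n
    cov = sum((x - mean_x) * (series[x] - mean_y) for x in years)
    var = sum((x - mean_x) ** 2 for x in years)
    return cov / var


def worst_years(series, n=5):
    """Return the n years with the largest values, worst first."""
    return sorted(series, key=series.get, reverse=True)[:n]


# Placeholder numbers (NOT real data): noisy from year to year, rising overall.
toy = {1: 2.0, 2: 1.0, 3: 4.0, 4: 2.0, 5: 6.0, 6: 3.0}

print(linear_trend(toy) > 0)   # a positive slope means an upward trend
print(worst_years(toy, 2))     # the two largest years in the toy series
```

With the real annual series loaded into the same `{year: acres}` shape, a positive slope together with a `worst_years` list dominated by recent years would correspond to the pattern described above.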

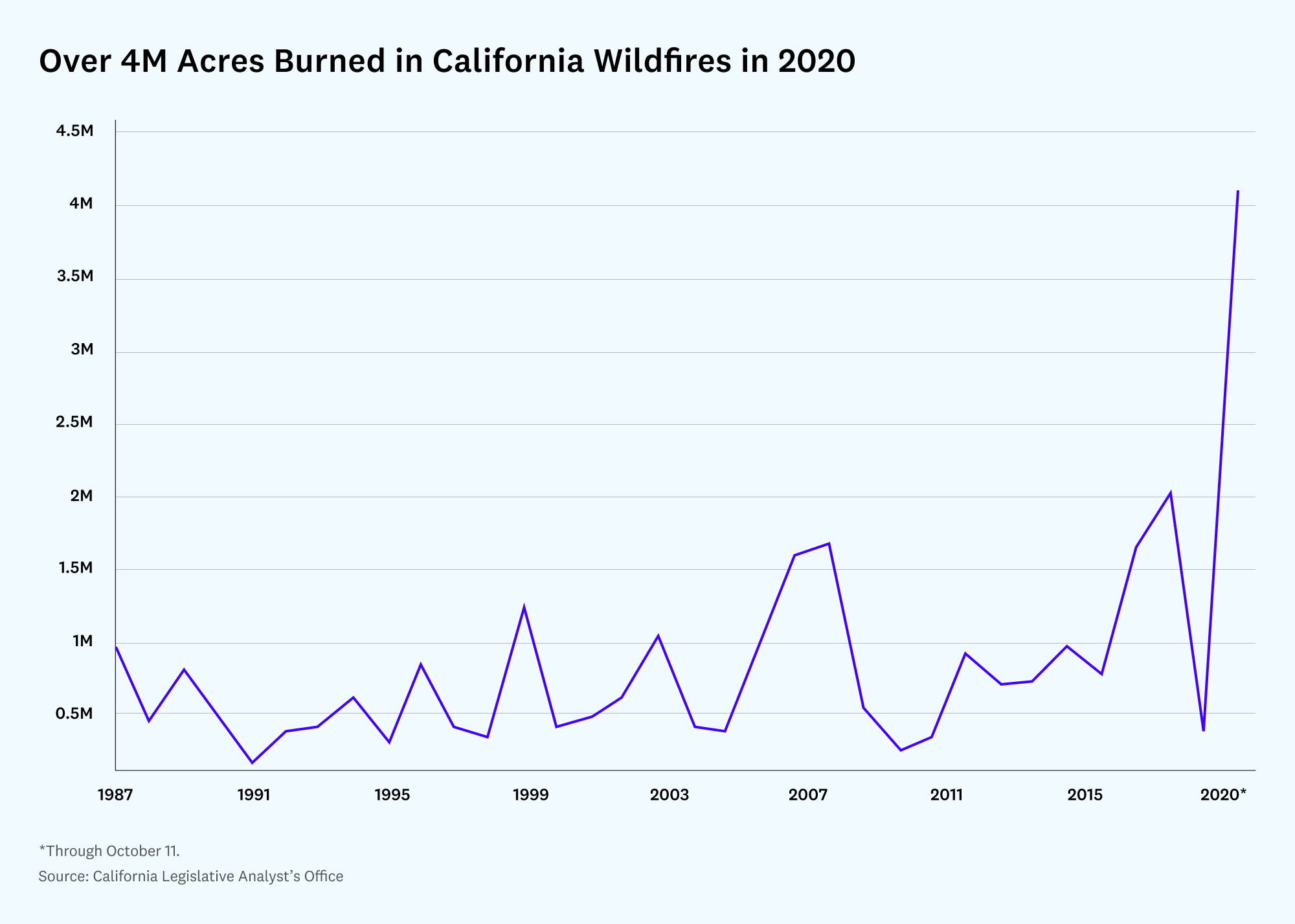

More recent data show that 2020 was the worst year of all.

Taken together, these data (derived from satellite imagery, airborne cameras, and written records) seem to make clear that wildfires became increasingly destructive in the years 1950-2020.

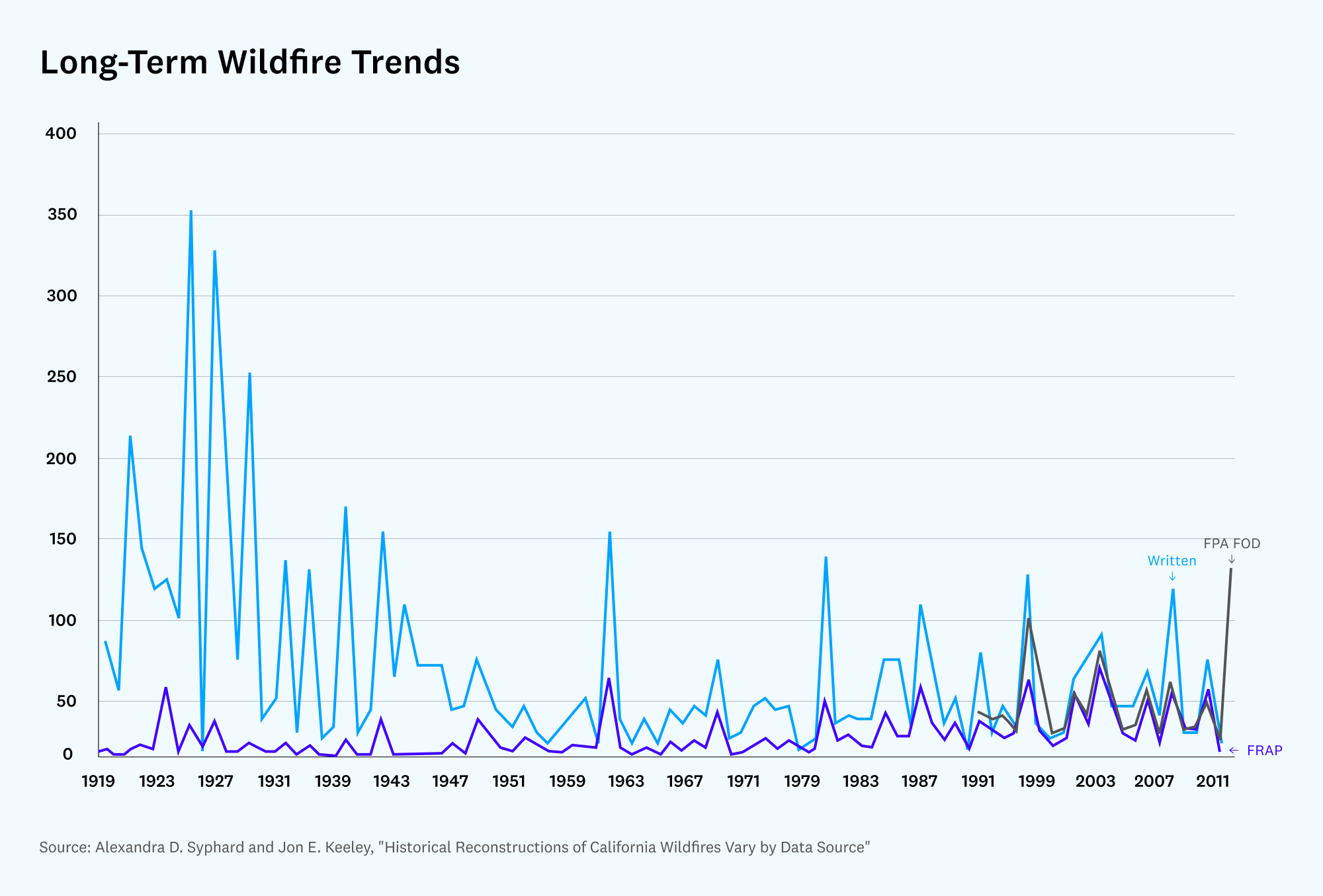

But in gauging the longer-term trend of what’s really happening with the fires, it’s necessary to go back much further. Data derived from written records from Cal Fire and the U.S. Forest Service dating back to 1919 show that wildfires, far from increasing, have actually declined over the last 100 years. And in fact the website of the National Interagency Fire Center previously noted that fires were at their very worst a century ago. (See data, research, and methodology for this article.)

The same trend can be seen in a review of three separate long-term wildfire data sources, which show that wildfires declined from 1919 to about 1996 and have ticked upward since then, but not to the levels of a century ago:

The data on the overall, century-long trend suggest that most of the 20th century represented an unusually low amount of fire, and what we’re seeing now is a return to the “normal” levels of fire of the early 1900s.

It may also complicate the conventional narrative that increased wildfires in the past two decades are the result of climate change, since the data suggest the damage done by wildfires is now less than that of a century ago.

Is it then possible that government mismanagement (another competing theory) is the central driver of wildfire damage, and not climate change? We’ll examine both of these possibilities.

Neither climate change nor government mismanagement “cause” wildfires, however—they can only increase the likelihood that they will be destructive. First, something must spark a fire.

What causes wildfires?

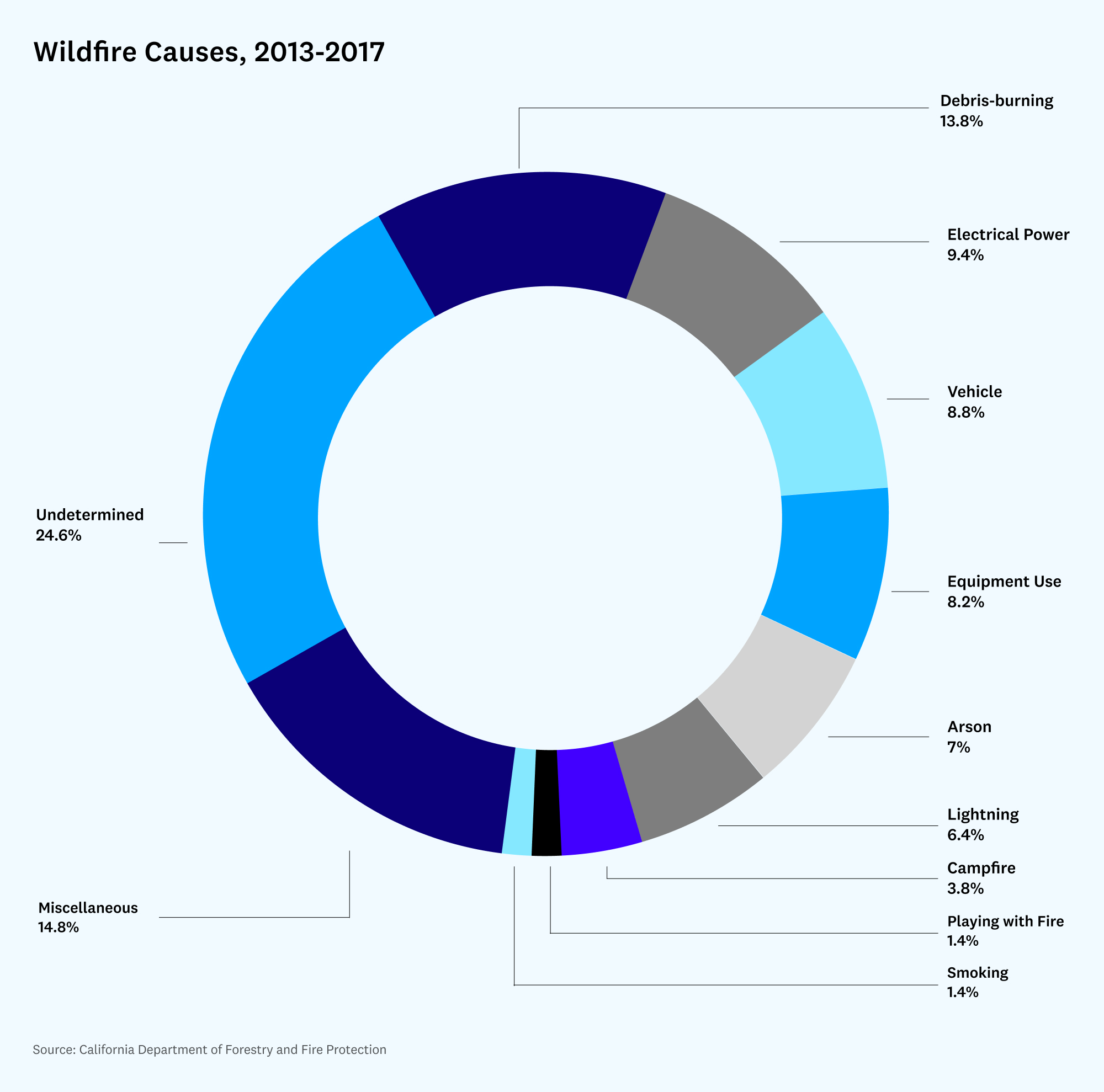

Most fires are small in area and do little damage; what matters is not necessarily what starts the most fires, but rather what leads to the most damage. Still, starting with the causes can lead us toward some possible ideas to minimize damage.

What we care about are causes, weighted by seriousness. Debris-burning, at 13.8% of fires started, is not considered a major cause of major fires. Fires due to miscellaneous causes, however, which make up nearly 15% of fires, can be very damaging; they represent a long tail of potential causes of fire that are hard to control. In the Carr fire, for instance, a flat tire caused a car’s wheel rim to scrape against the asphalt, creating sparks that set off the fire. In the Valley fire, a wire at a poorly connected hot tub overheated, melted, and ignited dry brush at a nearby home. And the Ranch Fire, one of the fires that merged to form the Mendocino fire, was started by a rancher who inadvertently sparked dry grass by hammering a metal stake while trying to find a wasp nest.

When focusing on prevention, however, such random triggers are nearly impossible to eliminate.

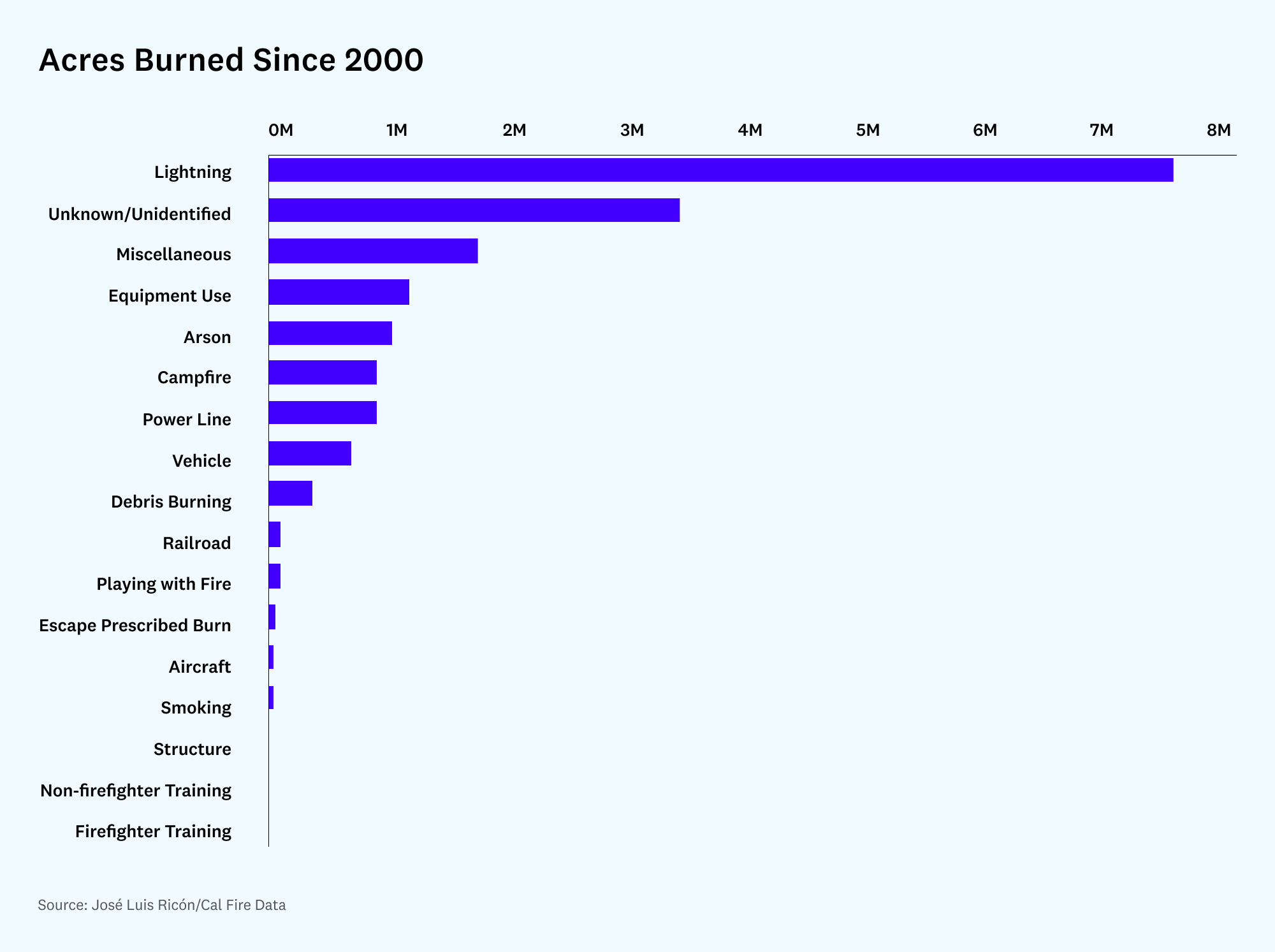

Power lines are the next most frequent cause of fires, and the fires they cause are destructive indeed. According to Cal Fire, 6 of the 20 most destructive fires since 1991 (by structures burned and deaths) were due to faulty power lines. However, power lines are still fairly low on the list when looking at overall acres burned since 2000. (I created this GitHub repository for supporting data.)

Lightning strikes, though only causing 6.4% of fires, have caused the most fire damage in recent years:

Lightning storms were responsible for the aggregated 38 fires that comprised the August Fire Complex, which burned almost a quarter of the entire surface area that burned in 2020, despite those 38 fires being just 0.38% of the 9,917 fires reported that year. Lightning also caused the rest of the mega fires of 2020—the SCU Lightning Complex, the LNU Lightning Complex, the North Complex, and the SQF Lightning Complex.
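The share quoted above can be checked with a couple of lines of Python. The variable names are my own; the two counts are the ones given in the paragraph.

```python
# Counts taken from the paragraph above.
august_complex_fires = 38
total_fires_2020 = 9_917

# Fraction of all reported 2020 fires that made up the August complex.
share = august_complex_fires / total_fires_2020
print(f"{share:.2%}")  # prints 0.38%
```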

This raises the question: Are we seeing more lightning, or is the same amount of lightning striking drier forests, leading to more fire?

It turns out that the amount of lightning in California remained constant from 1986-2000, suggesting that it may be drier forests, rather than more lightning, that is leading to greater damage from lightning strikes. This is not to rule out that lightning strikes are trending up specifically in the 2000-2021 period, for which no data is freely available.

So in examining the data, it seems that unfortunately not much can be done about some fires, even some quite significant ones, that have random, human or mechanical causes. And not much can be done about lightning strikes. Faulty power lines, however, are addressable, and we’ll focus on them later. We’ll also examine the data on climate change.

But first, let’s look closer at humans’ role in fires—and ways in which people are affected.

How significant are human causes (and impacts)?

Trying to disentangle the culpability of humans in wildfires is difficult: lightning is a discrete event, whereas human factors include fire management practices, urban construction, roads, and power line management. Mann et al. (2016) tried to separate these relative contributions and found that, even after controlling for the effect of climate change, human activity has still contributed to more fire on a net basis than non-human factors.

I took data from the Fire Perimeters database from Cal Fire and plotted the area burned, the number of fires, and the causes, from 2000 to the present day. (The notebook used to generate the data below is available at the Open Nintil repository.)

In these two maps (Southern California, Northern California), red represents human-started fires. Blue represents fires of unknown cause. Green represents fires due to natural (e.g., lightning) causes:

From analyzing this dataset, it’s clear there is a trend toward more area burned and more fires, as well as toward an increase in the largest fires. Whereas 30% of the fires were responsible for 90% of the area burned in a given year in the 1940-2020 period, now fewer than 10% of the fires (recently, fewer than 25 fires in a given year) account for most of the area burned.
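A concentration figure of this kind can be computed by sorting a year's fires from largest to smallest and counting how many are needed to reach a target share of the total area. The sketch below shows the idea in Python; the function name `share_of_fires_for_area` and the `toy_areas` list are hypothetical, and this is not necessarily how the notebook mentioned above does it.

```python
def share_of_fires_for_area(areas, target=0.9):
    """Smallest fraction of fires whose combined area reaches `target` of the total."""
    ordered = sorted(areas, reverse=True)  # largest fires first
    total = sum(ordered)
    running = 0.0
    for count, area in enumerate(ordered, start=1):
        running += area
        if running >= target * total:
            return count / len(ordered)
    return 1.0


# Made-up acreages for one year (NOT real data): one fire dominates.
toy_areas = [950, 20, 10, 5, 5, 4, 3, 1, 1, 1]
print(share_of_fires_for_area(toy_areas))  # prints 0.1
```

In this toy year a single fire out of ten accounts for more than 90% of the area, so the function returns 0.1. Applied to each year of a real perimeter dataset, a falling value over time would indicate that area burned is concentrating in fewer, larger fires.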

Geographically, almost every fire in the Los Angeles area is due to human causes. In Northern California most of the fire tends to be due to lightning, and around the Bay Area there’s a combination of both. There’s also a surprisingly high percentage of fires whose causes are unknown, even for large fires like the 2020 Creek Fire.

The dataset also reveals the natural effects of wildfires: What has burned does not burn again (at least not for about 10 years after the original fire). In some areas, trees are not coming back, with the terrain being converted to chaparral. In turn, chaparral is being converted to grasslands and sage scrub.

Measuring the deeper health impacts

It’s worth noting that acreage should not be the sole metric when assessing wildfire damage (as suggested here); measuring acres burned, structures burned, or even lives lost still likely underestimates the true costs of wildfires. One would have to add the economic costs of individual wildfire mitigation, the quality-of-life costs associated with people being unable to enjoy the relevant natural areas, and the potentially massive health costs from millions of people breathing polluted air. There is work attempting to quantify the impact of wildfire-produced air pollution on health; Wang et al. (2021) estimate that the California wildfires of 2018 alone, via increases in mortality risk, caused the death of 3,652 people, which is 35 times more than the lives lost directly due to the fires.

This kind of indirect damage due to pollutants is not always considered in cost-benefit analyses of the wildfire equation. The National Fire Protection Association, which is often quoted as an authority for costs of wildfires, does not include health-related costs in its calculations. Neither does the Wikipedia cost calculation figure: the 2018 wildfires article cites a $26.35 billion loss, whereas the Wang et al. paper, adding in health and indirect (counterfactual loss of economic activity) losses, gives a total of $148.5 billion.
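To see how much the accounting choice matters, the figures quoted above can be compared directly. This is a back-of-the-envelope sketch in Python: the variable names are mine, and the implied direct death toll is derived from the stated "35 times" ratio rather than taken from a source.

```python
# Figures quoted in the text for the 2018 California wildfires.
direct_loss_bn = 26.35      # USD billions, cited in the Wikipedia article
total_loss_bn = 148.5       # USD billions, Wang et al. (2021), incl. health and indirect losses
pollution_deaths = 3_652    # Wang et al. (2021) estimate
stated_ratio = 35           # pollution deaths relative to direct deaths, as stated above

# How many times larger the full estimate is than the direct-loss figure.
cost_multiple = total_loss_bn / direct_loss_bn
# Direct death toll implied by the stated ratio (a derived value, not a sourced one).
implied_direct_deaths = pollution_deaths / stated_ratio

print(round(cost_multiple, 1))        # prints 5.6
print(round(implied_direct_deaths))   # prints 104
```

In other words, including health and indirect losses raises the estimated cost by a factor of between five and six.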

It’s easier to notice property damage than long-term health impacts, and assigning a value to rebuilding a building is easier than modeling the cost of particulate matter getting into people’s lungs. Fortunately, this is starting to be acknowledged. California’s Wildfire and Forest Resilience Action Plan has a section on reducing the health effects of smoke (p. 34). The Costs of Wildfire in California report considers health effects as well.

Conditions for more damaging fires

All of our analysis so far has focused on what triggers individual wildfires. Now let’s delve into the conditions that make wildfires more or less damaging. Simply put: Drier, denser vegetation provides more “fuel” and creates conditions for more damaging fires that are harder to control.

Broadly speaking, there are two ways vegetation can become drier and denser: climate change—either anthropogenic (human-made) or natural variation—or land management policies that increase risk of fire.

Skeptics of anthropogenic climate change as the main driver of increased wildfire damage point to data that focuses on the high fire prevalence in the more distant past. Before 1800, the average annual acreage burned was 1.8 million hectares, or around 4.4 million acres (Stephens et al., 2007). This is roughly equal to the area burned in 2020, the worst year in recent history, which supports the argument that California is simply seeing a return to normal in recent years rather than a 21st-century phenomenon driven by anthropogenic climate change.
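The unit conversion behind that comparison is easy to verify. In this short Python check, the constant name is mine and the factor is the standard one (1 hectare is about 2.47105 acres).

```python
ACRES_PER_HECTARE = 2.47105  # standard conversion factor

pre_1800_hectares = 1.8e6    # Stephens et al. (2007), as quoted above
pre_1800_acres = pre_1800_hectares * ACRES_PER_HECTARE

print(f"{pre_1800_acres / 1e6:.2f} million acres")  # prints 4.45 million acres
```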

This is a position held by some (see some examples here, here, and especially here), who point to other factors driving the recent increase in fires, such as the firefighting policy of not allowing any fire in the environment, which leads to the accumulation of fuels, and larger fires years later. The recent uptick in fires, according to this argument, is a result of a “fire deficit”—an anomalously low amount of burns that preceded the current era, the product of a century of aggressively fighting fires, which led to overgrown forests, which led to more fuel for fires to spread.

But the issue is a bit more complicated than that. Even leaving anthropogenic climate change aside, the climate oscillates naturally; Keeley & Syphard (2019) find that, in the short term at least, higher rainfall in one year leads to more fires the next because of greater vegetation growth. Indeed, the wettest year on record in California (2016-2017) was followed by intense fires (2018).

These periods of higher or lower rainfall may be driven by natural oscillations in the climate, like the Pacific Decadal Oscillation or the related Atlantic Multidecadal Oscillation (AMO). The same is true for temperature: Kitzberger et al. (2007) noted that a current warming trend in the AMO suggested that we may expect an increase in widespread, synchronous fires across the western U.S. in coming decades (more temperature, more fires), which is precisely what happened in the years after the publication of the paper.

If one wants to see how much of the trend is attributable to climate change, one must model those oscillations and determine how much they affected the aridity of the fuels present in western U.S. forests (while controlling for the impact of human activities like forest management). Most such analyses, such as Abatzoglou & Williams (2016), use data from 1950 onwards, a time period that covers both cold and warm phases of the Pacific Decadal Oscillation (though ideally we’d like to see a model that reconstructs the entire observed dataset of fires).

What the authors find is that half of the increase in aridity of the fuels present in western forests, including those in California, was due to anthropogenic climate change, with the rest being natural variation (possibly the oscillations described earlier).

Even this analysis, which adds a layer of nuance to the “is climate change to blame” question, focuses on the direct impacts of anthropogenic climate change on fuel aridity and does not address several other pathways by which it may have affected wildfire activity (either in a positive or negative direction), according to the authors.

But it does make sense that a more arid California would tend to burn more. And measuring whether California is indeed becoming drier is easier: there is data going back more than 100 years, which should cancel out periodic oscillations. The data show that there remains a trend toward dryness.

Based on the above, it appears that climate change is acting in the expected direction: California is getting warmer and drier—and these two factors, by virtue of the thermodynamics of combustion, will lead, other things being equal, to more fire in the future—even if land management policies were gold standard.

Land management policies

Wildfires, left unchecked, would burn until there was no more fuel to burn. Of course, this is not ideal—unchecked wildfires would generate major pollution, loss of life, and loss of property. So it seems natural to want to suppress every single fire. Fire is scary and hard to control.

Two competing fire-management philosophies have existed in an uneasy balance for most of a century in California. The first, fire suppression, is the set of activities aimed at extinguishing fires; this is traditional, old-fashioned firefighting. Put out fires as they occur (though not always, for example in cases where allowing them to burn out is seen as reasonable, efficient, and safe), and conduct prescribed burns occasionally to thin out vegetation and decrease the chances of fires in the future.

Fire exclusion, on the other hand, means a zero-tolerance policy toward fires: neither letting existing fires burn (managed wildfire) nor starting new ones (prescribed burns).

But this is one of those Talebian cases where systems require some volatility to stay more stable than otherwise—better to allow smaller periodic shake-ups than eventual eruptions. It is now commonly agreed that fire exclusion allows for too much fuel to accumulate, leading to larger and more devastating fires. Counterintuitively, too much firefighting leads to more fire.
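The intuition can be sketched with a deliberately crude toy model in Python. Everything here is an assumption for illustration (the function name `simulate_fuel`, the constant growth rate, the fixed fire intervals, the arbitrary fuel units); it is not a calibrated fire model and does not come from the sources cited in this article.

```python
def simulate_fuel(years, growth_per_year, burn_interval):
    """Return the fuel consumed by each fire in a toy landscape.

    Fuel grows by `growth_per_year` every year, and every `burn_interval`
    years a fire consumes everything that has accumulated.
    """
    fuel = 0.0
    fires = []
    for year in range(1, years + 1):
        fuel += growth_per_year
        if year % burn_interval == 0:
            fires.append(fuel)  # fire size scales with accumulated fuel
            fuel = 0.0
    return fires


# Frequent small burns versus decades of exclusion followed by an eruption.
frequent = simulate_fuel(years=60, growth_per_year=1.0, burn_interval=5)
excluded = simulate_fuel(years=60, growth_per_year=1.0, burn_interval=30)

print(len(frequent), max(frequent))  # prints 12 5.0
print(len(excluded), max(excluded))  # prints 2 30.0
```

Both scenarios burn the same total amount of fuel over 60 years, but under exclusion it burns in two events six times larger than any single fire in the frequent-burn scenario. Real landscapes are far messier, so this only illustrates the direction of the effect.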

It took a while for the state of California to come around to this way of thinking. After a series of large fires in 1910, the U.S. decided it had had enough and aimed to prevent any and all fires from ever happening. In 1935 a policy was instituted under which every fire should be suppressed by 10 a.m. the day following its initial report.

The state was not unaware that fire exclusion inevitably increases hazard by encouraging undergrowth. Before embarking on a fire exclusion policy, the early Forest Service commissioned studies about whether or not to engage in prescribed burns—controlled burns that clear out growth and make landscapes less hospitable to out-of-control wildfires. After years of debate, the state decided against prescribed burns. Any fire was deemed too dangerous.

In 1970 a reversal of these policies started, and now the consensus position is that prescribed burns can be a valuable tool.

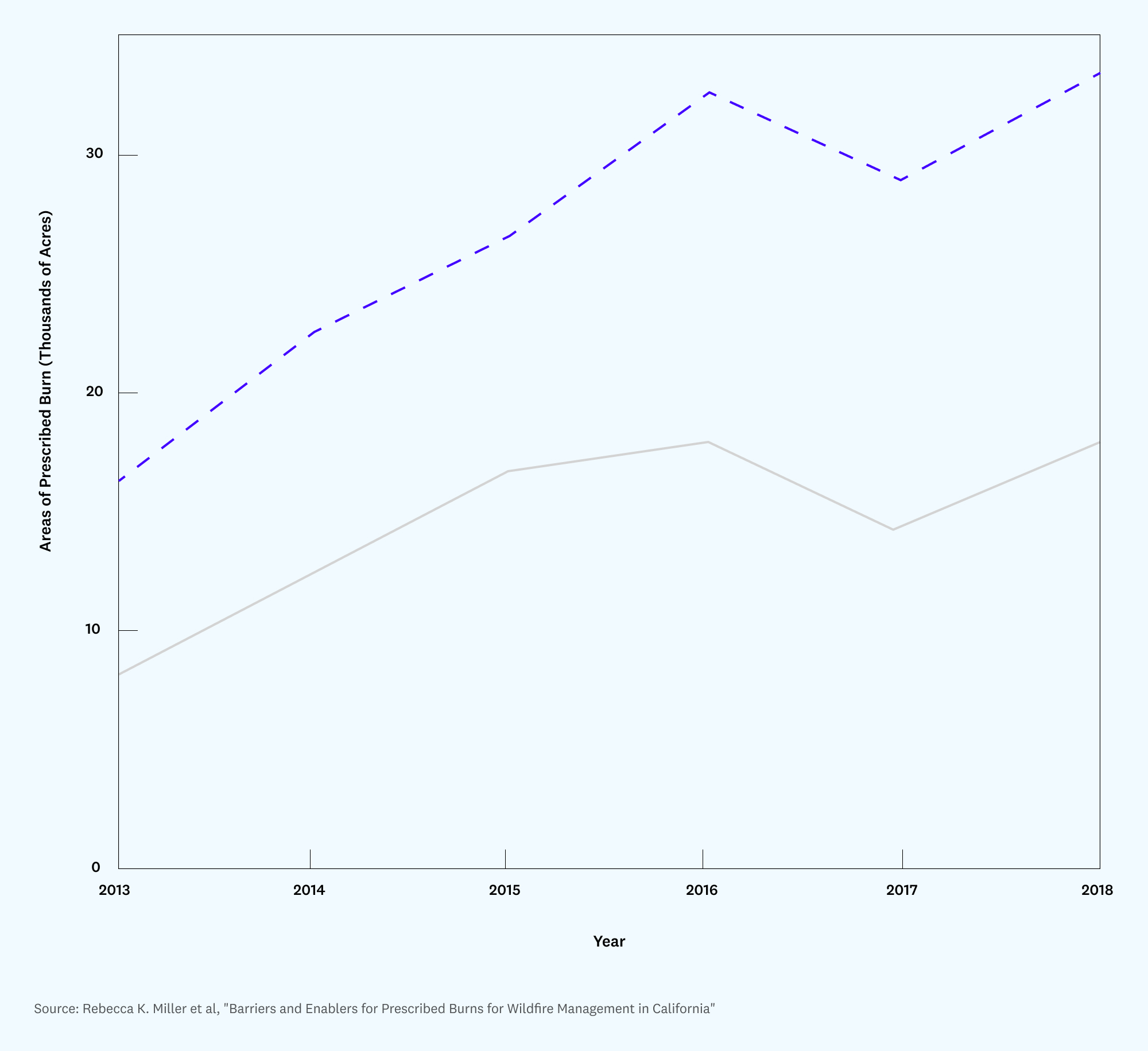

Despite this, California continues to struggle to carry out a consistent fire suppression policy, falling short of its own goals (Miller et al., 2020). See the figure, where the dashed line is the planned burn and the solid line is the actual burn.

Even the prescribed burn goals (just over 30,000 acres for 2018, in the above chart) are far short of what would be required to reduce future wildfire damage: The California Department of Forestry and Fire Protection estimated in 2010 that 20 million acres would require treatment in order to reduce the likelihood of catastrophic fires. Even if the state were to increase the yearly burn to 100,000 acres, it would take 200 years to get all the burning done that state fire officials believe is necessary. The number Miller et al. actually call for is 1.01 million acres a year, which is substantial, around 20% of the total surface that burned in the 2020 wildfires.
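The timescales in this paragraph follow from simple division, sketched below in Python. The variable names are mine, and the 2020 total of roughly 4.4 million acres is an assumption borrowed from the comparison made elsewhere in this article; a different 2020 total would shift the last figure slightly.

```python
acres_needing_treatment = 20_000_000   # Cal Fire estimate (2010), as quoted above
hypothetical_annual_burn = 100_000     # acres per year, the "even if" scenario above
miller_target = 1_010_000              # acres per year, Miller et al.
acres_burned_2020 = 4_400_000          # assumption: approximate 2020 total used in this article

# Years needed to treat the full area at each annual rate.
years_at_100k = acres_needing_treatment / hypothetical_annual_burn
years_at_target = acres_needing_treatment / miller_target
# Miller et al.'s annual target as a share of the area burned in 2020.
target_share_of_2020 = miller_target / acres_burned_2020

print(years_at_100k)                   # prints 200.0
print(round(years_at_target, 1))       # prints 19.8
print(f"{target_share_of_2020:.0%}")   # prints 23%
```

At the rate Miller et al. call for, the backlog would take about two decades rather than two centuries, and the annual target comes to somewhere between a fifth and a quarter of what burned in 2020.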

So what’s preventing California from reaching that target? Miller et al. asked a range of stakeholders, and many of them pointed to political and bureaucratic constraints. In areas managed by Cal Fire, for example, it is illegal to let fires burn—the agency is required to extinguish all unplanned fires. (On federal lands, it is legal to let wildfires burn if deemed necessary. In the Sierra Nevada, the U.S. National Park Service has been leveraging wildfires for treatment to great effect, to the point where the area treated by managed wildfire is similar to the area undergoing prescribed burn in the years 1968-2017.)

Although heralded as the future of fuel treatments by most interviewees, managed wildfire is unlikely to be used on state and private land in the near future because of legal responsibilities and the need for cross-jurisdictional agreement. Interviewees therefore underscored the importance of expanding prescribed burns, particularly on non-federal lands, to achieve the ecological benefits of fire. Even this presents political and legal challenges, though: If a prescribed burn escapes (which happens about 2% of the time), there are substantial legal and financial penalties imposed on the burner. This was addressed by SB 1260, which exempts agencies from liability if the burn meets certain safety criteria. On federal lands, meanwhile, managers who use prescribed burns don’t receive any reward or praise, but are personally liable if their fires escape. This disincentivizes them from using this tool as much as they could.

Beyond policy, there are procedural and coordination issues that hamper effective fire prevention, the interviewees said. The “burn windows” allowed by the California Air Resources Board (CARB), for example, may be too narrow. While CARB argues that its burn days go unused, landowners say CARB is too restrictive. There may be a mismatch here between the availability of teams to do the burns and when CARB allows them. In addition, environmental regulations like NEPA and CEQA impose a heavy burden on justifying the burns, decreasing their use. If the approved burn window is missed, landowners (whether private owners or public agencies) have to reapply for approval.

These misaligned incentives and regulatory issues contribute heavily to what’s known as the firefighting trap—the situation where fighting fires in the short term, at the expense of disregarding prevention and preparation for future fires, yields an increasing amount of fire.

In any given year, a firefighting agency has to allocate resources between fighting fires and preventing them. Ideally an agency would model the expected result of each management practice, calculate how it affects fire hazard over time, and allocate resources accordingly.

This is not how agencies operate in reality. Cal Fire has no choice but to extinguish any and all fires under its area of responsibility regardless of any other consideration, the only real constraint being firefighting crew availability. Crews that work on prevention also work on suppression, and with a lengthening fire season, crews that would otherwise be preparing for the next season are fighting the current one. Breaking this cycle of self-defeating firefighting practices would involve potentially politically hazardous actions.

So far, I’ve presented a range of evidence looking at various factors individually. Ideally we could examine a single piece of work that explicitly models fire exclusion and climate change. There are only a handful of papers trying to consider those effects jointly. Hanan et al. (2021) examine two different locations (Johnson Creek and Trail Creek) in Idaho using the most detailed model of coupled hydrology-fire-ecology-climate that I’ve seen so far (RHESSys-WMFire).

So is it fire exclusion or climate change? The answer is … it’s complicated. For a given particular area, fire exclusion can both worsen and, surprisingly, improve the wildfire situation. The same can be true for climate change—while increasing aridity makes for more flammable fuels, at some point of increased aridity (and for a given vegetation type), the growth rate of new vegetation is stunted, resulting in fewer fires.

Could it then be that the “fire deficit” that the state experienced for much of the last century is actually caused by climate change, rather than fire exclusion? This is very unlikely: If California as a whole were at the point where the vegetation-reducing effect of aridity overwhelmed the fire-inducing effect, we would see an inverse correlation between aridity and fire in the last few decades; but the Abatzoglou et al. papers cited above show otherwise: Right now, more aridity means more fire.

While it’s difficult to pin down the exact effects of both climate change and fire management practices (and damage from wildfires is likely to be the result of a combination of these factors), the warmer temperatures in California, driven in the long term by anthropogenic climate change, are unquestionably contributors to increased wildfires.

Another often-cited factor in wildfire trends is a substantial increase in housing construction in the “wildland-urban interface” (WUI). More people living near wildland areas means more roads (vehicles are a nontrivial source of ignitions), more power lines, more campfires (easier to go camping when nature is close to home), and more accidents that can lead to fire in general.

The WUI, which is popular among homeowners for its quiet, idyllic settings, features repeatedly in the California wildfires discourse. First, new construction at the WUI has contributed to a rapid increase in damage caused to human life and property (it’s hard to have wildfires in treeless San Francisco), as urban dwellers are increasingly being pushed out of cities due to rising housing prices. This also increases the chances that human activity will cause fires. Second, as a prescriptive measure resulting from the above diagnosis, there are calls for moving people out of the WUI. A few of the fires I mentioned earlier started here: the faulty hot tub that started the Valley Fire is an example of fire emanating from the WUI. I’m skeptical, however, about attempts to reduce the human presence in the WUI before trying other solutions. WUIs are great places to live, and depriving people of the opportunity to make their homes in pleasant, more affordable places as a way to reduce wildfires should be seen as a false choice: progress should mean not having to make such tradeoffs. We can effectively fight fires and have populations living in the WUI.

***

California wildfires present a complex challenge, owing to the many interrelated factors that contribute to them, and we still don’t know how to perfectly weigh each of them, complicating the search for solutions.

But there is plenty we do know. Burned acres per year in California are increasing, relative to 1950. Despite this trend, and the physical, economic, and emotional damage that we witness annually, the quantity of fire is not unprecedented in the history of California: In both the early 20th century and pre-1800 there were more acres burned. Still, the current level of fire is predicted to continue to increase, though some years will see very little fire and others will see 2020-scale mega fires.

In terms of prevention, there are no answers that are both easy and obvious. The immediate cause of most fires is attributable to humans, but in many cases the igniting incidents are random and hard to completely eliminate, and in any event, humans do not cause most large fires. Lightning strikes continue to play a significant role in igniting fires and should not be disregarded.

Beyond immediate causes, focusing on the conditions under which fires are most likely to occur and do serious damage is challenging. It’s clear that the practice of zero-tolerance fire management, or fire exclusion, led to a buildup of fuel throughout California. This was compounded by a particularly wet period leading to strong plant growth, as well as rising temperatures and greater aridity due to climate change.

Even in the absence of climate change, we would still expect to see more fire than we saw in the 1950-1980 period; that is, the graphs typically used to point to the effects of climate change tend to overstate its magnitude and understate natural variation and human firefighting practices. That said, even if California were to engage in a more aggressive policy of prescribed burns, increasing temperatures would lead to progressively more fire, beyond what may be desirable to the state.

Ultimately, the right question to ask is not quite whether the driver is climate change or fire exclusion policies. The right question to ask is what to do moving forward, especially accounting for various political and social constraints. Even without climate change, fire exclusion, or weather oscillations, a lot of California is going to naturally burn. The question then becomes: How much of California should burn (and when), given what Californians care about?

Potential paths to progress

So what do we do next? There is no one silver bullet against wildfires. A healthier relationship with fire will require a multifactorial approach. Instead of trying to aim for zero wildfires, we should aim to manage fires and reduce their impact on human activity.

Reducing carbon emissions is a crucial step to reducing wildfires in the future. The role of climate change in wildfires may be overstated at times, but there is little doubt that it is an important contributor to the increasing heat and aridity of the state. But since that is somewhat outside the scope of this essay, here are other important and more specific actions that should form the bulk of our response, and how technologists and others can focus their efforts:

Ease air quality regulations, including for prescribed fires. Temporary worsening on a schedule (so the affected population can prepare) is better than multi-month bad air quality.

Maintain power lines or move them underground. Electrical power causes many of the most devastating human-caused fires. Currently the way to deal with this is to shut off power to the lines, leading to blackouts in the affected areas. But this doesn’t always work, as it requires making the decision to cut power, and this is not always the route taken. A better solution (one that reduces fire risk and ensures permanent supply) is to underground the lines. It is already the default in many countries and, while expensive, could cost less than the fires that overhead lines lead to. This is easier said than done: California has given some money to state utility PG&E to do this and yet the company is using the money for something else. Undergrounding may be expensive and require a fight (this is a thing: there’s a woodpole lobby).

Enable Cal Fire to leverage wildfires for treatment purposes. Most of the land in need of treatment is federally owned, so state responses may have limited impact. But it’s important to note that the state of California is far behind its own, likely inadequate, goals of fire treatment. Breaking the self-defeating firefighting trap would require actions such as modifying Cal Fire’s charter to allow the agency not to intervene in wildfires, or even not to intervene by default unless fire is predicted to cross a certain threshold of expected damage. Savings from this policy would allow prevention activities to take place more often. More realistically, Cal Fire should have a ring-fenced budget for fire suppression and another for fire prevention, but even then, is it politically acceptable to have some crews working unmolested on prevention projects while other crews are desperate for reinforcements miles away, trying to save lives? The temptation to shift resources to suppression activities is always there.

Coordinate efforts among firefighting agencies. There is a disconnect between the various action plans and roadmaps detailing measures being implemented and what is actually happening on the ground. The ideas are in the right documents, but they are not being translated into actions. It’s not enough to declare that some actions will be taken; the institutional machinery to actually make them happen has to be there.

Use market-oriented solutions in the WUI. California is preventing market mechanisms from fully operating to reduce construction in highly fire-prone areas. For example, higher insurance premiums would dissuade people from moving there, and, in a more extreme case worth exploring, could shift the responsibility of paying for causing the damage from the state to the insurers and, through them, to the potential culprits, the same way it is done for nuclear reactors or cars. Mandatory third-party insurance, perhaps baked into location-dependent property taxes for wildfires, could be a solution. This whole discussion of course could be extended with an analysis of what drives people to the WUI in the first place: It’s not just natural beauty, but the pursuit of affordable places to live. Fixing housing policy in the state, and reducing rents in urban areas, could mitigate the appeal of the WUI as a place to live.

Enforce “defensible space” around houses in the WUI. Current construction codes already ask builders to build houses in a reasonable way, for example by clearing vegetation around the building or by using fire-resistant materials. But if you look at any WUI area, lots of people just don’t do that. This is a broader theme in California: There is a gap between instituting laws and action plans and actually getting them executed. In any case, even with full compliance, one could always demand stricter standards here.

Work toward early detection. We know that we can use satellites to assess how large a fire is after the fire occurs. Could we use satellites to spot fires as they happen and extinguish them before it is too late? This is a hard problem: To do that you would need high spatiotemporal resolution, meaning high spatial resolution to pinpoint very small fires and high time resolution (sampling every few seconds) to be able to react fast enough. The satellites commonly used to generate relevant data are useless for early detection. With the potential exception of the recent Huanjing satellites and those launched by Planet Labs, there is no satellite that even begins to have sub-100m accuracy (Barmpoutis et al. 2020). In general, satellites that have higher spatial resolution fly in non-geostationary orbits, so they pass over any given spot only every few hours or days. Geostationary satellites constantly monitor one area, so they could provide real-time data, but their orbit is high, which limits their spatial resolution. But there is a kind of satellite that has been custom built for precisely this use case (detecting very small infrared signatures every few seconds): military satellites built to deliver early warnings of launches of intercontinental ballistic missiles (DSP, SBIRS, and OPIR). This is not the first time that someone has called for these satellites to be used for wildfire detection; one can find such calls from 10 years ago. Giving non-military entities access to currently classified information is no trivial matter, which complicates the chances for a solution here.
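The spatial-versus-temporal trade-off described above can be expressed as a simple two-part check. This is only a sketch under stated assumptions: the `Sensor` type and the two sensors are hypothetical, their numbers are illustrative rather than real satellite specifications, and the 10-second revisit cutoff is my own stand-in for "every few seconds". The 100 m cutoff follows the sub-100m figure mentioned above.

```python
from dataclasses import dataclass


@dataclass
class Sensor:
    """Hypothetical sensor description; values are illustrative only."""
    name: str
    spatial_resolution_m: float  # smallest feature the sensor can resolve
    revisit_interval_s: float    # time between looks at the same spot


def suitable_for_early_detection(
    sensor: Sensor,
    max_resolution_m: float = 100.0,  # sub-100m requirement from the text
    max_revisit_s: float = 10.0,      # assumed reading of "every few seconds"
) -> bool:
    """A sensor qualifies only if it is both sharp enough and frequent enough."""
    sharp_enough = sensor.spatial_resolution_m < max_resolution_m
    frequent_enough = sensor.revisit_interval_s <= max_revisit_s
    return sharp_enough and frequent_enough


sensors = [
    # Sharp but infrequent: revisits the same spot about once a day.
    Sensor("hypothetical low-orbit imager", 30.0, 24 * 3600.0),
    # Frequent but coarse: stares continuously from a high orbit.
    Sensor("hypothetical geostationary imager", 2000.0, 5.0),
]

results = {s.name: suitable_for_early_detection(s) for s in sensors}
for name, ok in results.items():
    print(name, "->", "suitable" if ok else "not suitable")
```

Neither hypothetical sensor passes, because each satisfies only one of the two requirements, which is the gap that purpose-built early-warning satellites are meant to fill.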

Explore drones and airships as firefighting tools. Fighting fires using drones that drop extinguishing balls into them may work for some fires under some weather conditions, for example when there is not much wind, the fire is within the drone’s operating radius, and there is a way to detect the fire before it grows beyond a very small size. I’m not that optimistic about the use of drones to fight fires in general, though it may be an intermediate step toward better solutions like airships. Airships can potentially hold more water than the largest aerial firefighting vehicle in the world, the 747 Supertanker. Cool as the idea may seem, one of the companies working on these airships doesn’t seem to have succeeded, and its patent has now expired. But the good thing about airships is that they don’t need energy to stay aloft (one could tether them and release them as needed), and they can carry more water than the usual drones. Fighting fire fast requires a combination of early detection and timely action, which can in turn be achieved by a combination of pre-positioning resources and high-speed delivery.

Consider taking over utilities. The case has been made elsewhere that the utility that provides service in most of California (including the area most prone to fire) is not being as well managed as it should be. Taking over PG&E is one of the proposals in a report on wildfires from the current state administration. One can think of this as a form of self-defense on behalf of the population of the state: It’s fine to have investor-owned utilities providing service as long as they don’t extract monopolistic rents, but if it’s indeed true that the utility service provider is exposing the state to unacceptably high levels of risk due to mismanagement, then there’s a case to be made for forcibly installing better management. This could be accomplished in many ways, from keeping the current company but forcing a reorganization, to nationalization or municipalization. Whether a change in ownership would truly solve the woes of the company is of course unclear, and decisions like this should be undertaken only after careful deliberation.

***

There isn’t a single cause of the recurrent California wildfires, nor a single solution. But it’s clear that we are making them worse, and that we don’t have to. Better forest management in the short term, and reducing carbon emissions in the long term, should help ameliorate the situation. However, even with perfect forest management and complete reversal of climate change, the ecosystems of California will remain fire-prone. Fire season can definitely be shorter and tamer, but it can’t be eliminated.

See data, research, and methodology for this article.

A version of this article previously appeared on Nintil.com.
